AI adoption has moved past the “should we?” phase. Most organizations have already experimented, and many have deployed tools broadly. Yet across industries, a familiar pattern is emerging: AI pilots stall, get shut down, or plateau long before they become a sustainable capability.

When that happens, the instinct is often to blame the model, the prompts, or the tooling. In practice, the root cause is usually more basic: AI is being introduced faster than governance, data trust, and operational ownership can keep up.

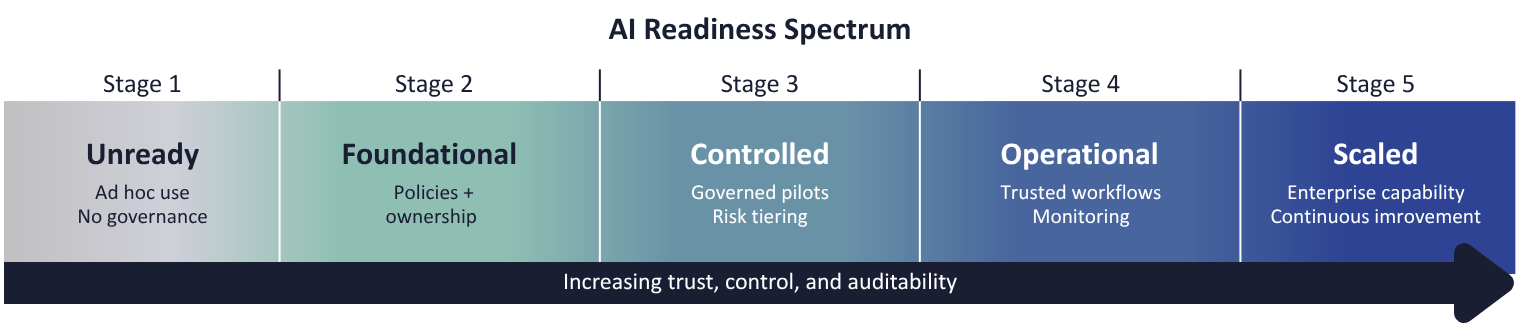

Over time, the pattern becomes clear. Organizations don’t struggle because AI doesn’t work; they struggle because their readiness to support it varies. One useful way to understand that variation is Eliassen Group's proprietary AI Readiness Spectrum, which reflects how prepared an organization is to adopt and scale AI responsibly.

This spectrum isn’t a scorecard. It’s a lens for answering two questions leaders are already asking:

- Where are we today, really?

- What’s the next responsible step forward?

A Practical Maturity Curve for Safe AI Adoption

Organizations tend to cluster into five recognizable stages. Most aren’t “behind”; they are simply trying to skip stages.

Stage 1. Unready: AI curiosity, no foundation

AI use is informal and often unsanctioned. Public tools and shadow AI are common, but ownership, policies, and data quality controls are unclear. Risk accumulates quietly.

Outcome: Pilots stall or get shut down.

What’s missing: Basic controls and clarity.

Stage 2. Foundational: Governance begins

Leadership acknowledges both the opportunity and the risk. Initial AI principles are defined, use cases are inventoried, and data governance starts to take shape, often unevenly.

Outcome: Limited pilots with heavy oversight.

What’s missing: Consistency and trust in data.

Stage 3. Controlled: AI is carefully allowed

This is the inflection point. Governance frameworks emerge, use cases are risk‑tiered, and data lineage and monitoring improve. AI starts delivering value, but only in narrow, defensible scenarios.

Outcome: Early wins without major exposure.

What’s missing: Confidence to scale.

Stage 4. Operational: AI is trusted in workflows

AI becomes part of daily work. Ownership is clear, decisions are auditable, and teams are trained on appropriate use. Monitoring shifts from reactive to continuous.

Outcome: Measurable efficiency and risk reduction.

What’s missing: A path to scale without friction.

Stage 5. Scaled: AI as a core capability

AI is no longer a project; it’s an enterprise muscle. Strategy, governance, and execution reinforce one another, allowing new use cases to be onboarded quickly and responsibly.

Outcome: Sustainable advantage with controlled risk.

What’s required: Continuous improvement, not complacency.

The Most Common Misstep: Trying to Jump Stages

The most consistent failure pattern we see is organizations trying to leap from experimentation straight to advanced deployment.

The more reliable approach is simpler and slower by design:

Move one stage at a time. Build governance and data trust first so pilots can scale without increasing risk.

AI readiness isn’t about how advanced your models are. It’s about whether your organization can answer basic operational questions:

- Who owns the outcome when AI is wrong?

- Which decisions are low‑risk vs. high‑impact?

- Can results be explained, audited, and defended?

- Do teams trust the data behind the output?

Until those answers exist, scale will remain fragile.

What “Getting Ready” Actually Looks Like

Contrary to popular belief, AI readiness rarely requires a massive replatforming effort or new tooling. The earliest and most effective work usually focuses on:

- where decisions actually occur,

- what data those decisions rely on,

- and what controls are needed before automation expands.

Organizations that make steady progress tend to:

- Clarify current AI usage and decision points

- Assess data trust (quality, ownership, lineage)

- Define governance as an operating model, not a document

- Build a roadmap around low‑risk, high‑value use cases

Notice what’s absent: buying more tools.

The EG AI Anthology: Perspectives Across the AI Readiness Journey

AI readiness isn’t a single discipline. It spans business strategy, data quality, governance, risk, and operational adoption.

To explore these dimensions more deeply, the EG AI Anthology brings together recent perspectives examining AI from multiple angles, including:

Raising AI the Right Way: Building a Business Case with Ambition—and Realism

5 Take Aways - Beyond the Hype Practical AI Use Cases

From AI Ambition to AI Results: How Data Quality Unlocks the Strategy Everyone Is Missing

Rewiring for AI: Eliassen Group's Leap from Adoption to Innovation

Auditing and Governing Shadow AI

The Importance of AI Governance: Turning Innovation into Sustainable Value

Whatever stage of the readiness spectrum an organization is starting from, these pieces offer complementary insights and practical considerations that help turn abstract conversations about “AI maturity” into concrete next steps.

A Final Thought

AI readiness isn’t a finish line; it’s a moving target as models evolve, regulations change, and new use cases emerge.

Organizations that make real progress aren’t chasing the most advanced technology. They’re deliberately building trust, ownership, and governance alongside capability, advancing one stage at a time.

That’s what turns AI momentum into results that last.

Author

Bill Gienke

VP and Principal, Business Advisory Solutions

https://www.linkedin.com/in/billgienke/